API access works for low to moderate use, but self-hosting becomes cheaper at high volume. Here's the problem: generative AI and LLMs aren't the same thing, and treating them as synonyms can cost you time and budget when you're evaluating solutions.

Think of generative AI as a category and LLMs as one tool inside it. Whether your AI investment pays off or turns into costly shelfware depends on knowing which technology addresses which problem.

This article clarifies what separates LLMs from generative AI, when each matters, and how to choose the right approach for your use case. We assume you already understand the fundamentals (what AI is, why it's useful), so we'll skip the 101 explanations and go straight to what you need to know.

Generative AI refers to systems that create original content: text, images, video, music, or code. Synthesis is the defining feature. These models learn patterns from enormous datasets and then produce new outputs.

Here's what falls under generative AI:

The business value is the automation of creative and knowledge work. Marketing departments produce content faster. Design teams can create visual prototypes without hiring freelancers. Engineers use AI to debug code. However, each use case requires a different model architecture.

What matters for you: Generative AI is the umbrella term. When someone offers you "generative AI," ask which type. Text generation is not the same as image generation, and conflating the two makes purchasing decisions harder.

Large language models are generative AI systems designed specifically for text. They predict the next word (more precisely, the next token) using patterns learned from billions of text examples. Their architecture (transformer neural networks) lets them keep track of context across long documents, which is why they can produce coherent essays rather than disconnected fragments.
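To make next-word prediction concrete, here is a toy sketch that counts which word follows which in a tiny corpus and then predicts the most frequent follower. This is a simple bigram counter, not a transformer; the corpus and function names are made up for illustration, and real LLMs learn far richer patterns over tokens and long contexts.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigrams(text: str) -> Dict[str, Counter]:
    """Count, for each word, which words follow it in the text."""
    words = text.lower().split()
    followers: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(followers: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Illustrative corpus (an assumption, not real training data).
corpus = "the model reads text and the model predicts text and the model writes"
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # prints "model"
```

The same idea, scaled up to billions of examples and a much longer context window, is what lets an LLM continue a prompt coherently.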

They are "large" because of the number of parameters. The largest LLMs have hundreds of billions or even trillions of parameters: adjustable weights that determine how the model interprets input and produces output. More parameters generally improve quality but increase computational costs.
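A rough back-of-the-envelope calculation shows why parameter count drives cost. The sketch below estimates only the memory needed to hold the weights, assuming 2 bytes per parameter (16-bit precision). The model sizes are illustrative, and real deployments need additional memory beyond the weights.

```python
def weight_memory_gb(num_parameters: float, bytes_per_parameter: int = 2) -> float:
    """Estimate memory needed to store model weights, in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


# Illustrative sizes only, not the specs of any particular model.
for label, params in [("7B", 7e9), ("70B", 70e9), ("500B", 500e9)]:
    print(f"{label}: ~{weight_memory_gb(params):.0f} GB of weights at 16-bit precision")
```

Under these assumptions, a 70-billion-parameter model needs about 140 GB for weights alone, which already exceeds the memory of a single typical GPU.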

LLMs excel at:

The difference between generative AI and LLMs is scope. Generative AI also includes image, audio, and video models. LLMs handle only text, although recent models such as GPT-4 include vision capabilities, making them multimodal.

For your purposes: Consider LLMs if the output you need is text-based (content, dialogue, code). If you need music or graphics, you need a different kind of generative AI model.

You'll hear the term foundation model used alongside LLM. Here's the distinction.

A foundation model is a large-scale AI system pre-trained on broad datasets and adaptable to multiple downstream tasks. GPT-4, BERT, and DALL-E are all foundation models: they start from general-purpose training and are then fine-tuned for specific applications (question answering, image generation, sentiment analysis).

Foundation models vs LLMs: Not all foundation models are LLMs, but all LLMs are foundation models. LLMs are language-focused. Other foundation models may deal with audio (like Whisper for speech recognition) or vision (like CLIP, which links text and images).

Why this matters: Foundation models reduce development time. Rather than building a model from scratch for each use case, you start with a pre-trained foundation model and fine-tune it with your own data. This cuts costs and shortens time-to-market.

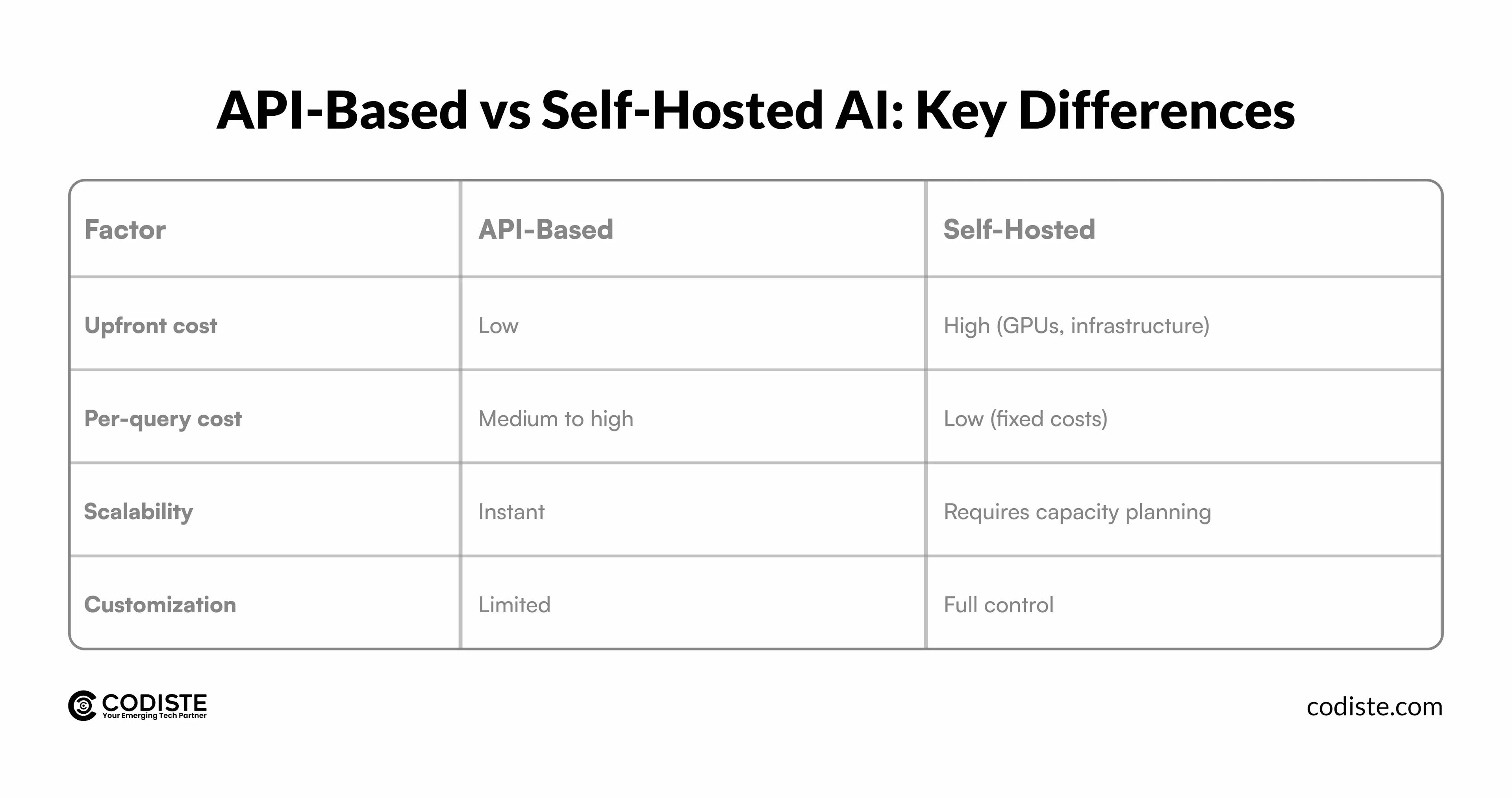

The tradeoff is dependency. Instead of building their own models, most businesses use API access to models like GPT or Claude. This costs less upfront, but it carries risks such as limited flexibility, vendor lock-in, and data privacy concerns. Self-hosting gives you control but requires significant infrastructure investment.

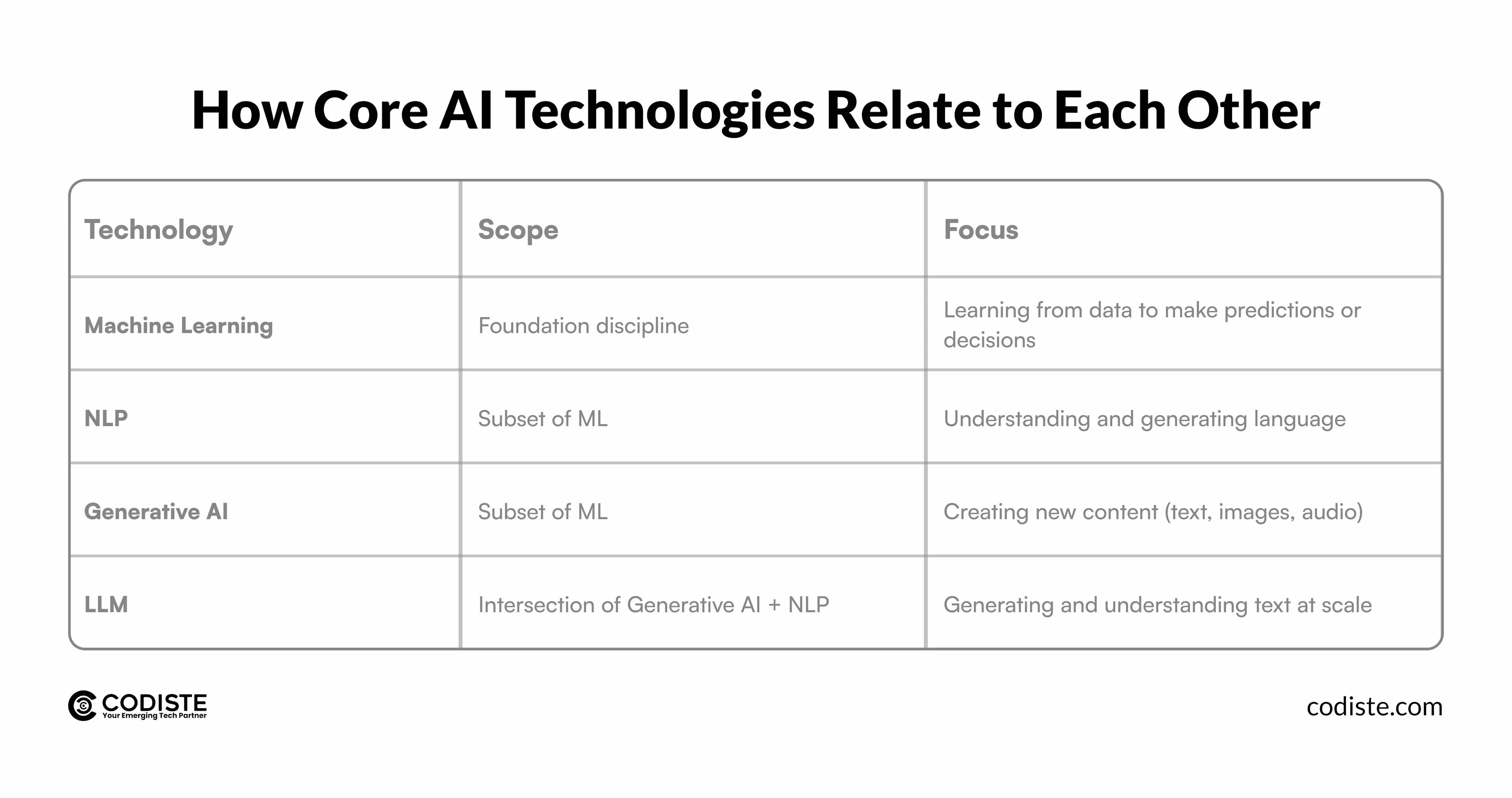

Let's clear up the hierarchy because understanding LLM vs NLP vs generative AI prevents confusion when evaluating vendors or hiring talent.

Here's the relationship:

Why this matters for hiring and vendor evaluation: If you need sentiment analysis or document classification, you don't necessarily need a generative AI specialist; you need NLP expertise. If you need content automation, hire for LLM experience. If you need visual design, look for image generation specialists.

The line between generative AI and LLMs is blurring thanks to multimodal AI: systems that process and generate multiple data types (text, images, audio) within a single model.

Multimodal AI vs LLM: Traditional LLMs handle text only. GPT-4 Vision and Google Gemini are examples of multimodal models that can understand images, answer questions about them, and respond in text. Some systems can also generate images and audio in addition to text.

Why this matters: Real-world problems rarely exist in a single format. Customer support inquiries contain screenshots. Product designs include sketches and written specifications. Marketing campaigns require both images and copy. Multimodal AI manages these workflows without switching tools.

Practical applications:

The tradeoff is complexity. Multimodal models are harder to train, need more diverse training data, and cost more to deploy. However, if your operations involve several types of content, the integrated approach eliminates coordination overhead and saves time.

There's another category emerging: agentic AI. This is where LLM vs generative AI vs agentic AI gets interesting.

Agentic AI refers to autonomous systems that plan, decide, and execute tasks independently. Unlike LLMs, which respond to prompts, agentic AI takes the initiative. It breaks complex goals into steps, gathers data, uses external tools, and adapts based on feedback.

The difference:

Example: An agentic AI system might use an LLM to draft an email, call an API to check inventory levels, update a CRM, and schedule a follow-up, all without human intervention.
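The workflow in this example can be sketched as a sequence of tool calls over shared state. Everything here is hypothetical: `check_inventory`, `draft_email`, `update_crm`, and `schedule_follow_up` are stand-in stubs rather than real APIs, and a real agent would typically let an LLM choose the next step instead of following a hard-coded plan.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentState:
    """Shared memory the agent carries between steps."""
    sku: str
    customer: str
    inventory: int = 0
    email: str = ""
    log: List[str] = field(default_factory=list)


# Hypothetical tools: each stub stands in for an LLM call or an external API.
def check_inventory(state: AgentState) -> None:
    state.inventory = {"SKU-1": 12}.get(state.sku, 0)  # fake inventory lookup
    state.log.append(f"inventory={state.inventory}")


def draft_email(state: AgentState) -> None:
    # In a real system an LLM would write this text.
    status = "in stock" if state.inventory > 0 else "out of stock"
    state.email = f"Hi {state.customer}, {state.sku} is {status}."
    state.log.append("email drafted")


def update_crm(state: AgentState) -> None:
    state.log.append(f"crm updated for {state.customer}")


def schedule_follow_up(state: AgentState) -> None:
    state.log.append("follow-up scheduled")


TOOLS: Dict[str, Callable[[AgentState], None]] = {
    "check_inventory": check_inventory,
    "draft_email": draft_email,
    "update_crm": update_crm,
    "schedule_follow_up": schedule_follow_up,
}


def run_agent(state: AgentState, plan: List[str]) -> AgentState:
    """Execute each planned step in order, with no human in the loop."""
    for step in plan:
        TOOLS[step](state)
    return state


result = run_agent(
    AgentState(sku="SKU-1", customer="Dana"),
    ["check_inventory", "draft_email", "update_crm", "schedule_follow_up"],
)
print(result.email)
print(result.log)
```

The point of the sketch is the shape: the LLM is one tool among several, and the agentic layer is whatever decides which tool runs next.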

The business case is end-to-end automation. Rather than using AI to assist with tasks, you use agentic AI to complete them autonomously. This applies to data analysis, research synthesis, client onboarding, and IT issue resolution.

The catch: Autonomous systems can make mistakes at scale. Without appropriate safeguards, they may take unintended actions. Fail-safes, monitoring, and testing become crucial.
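One way to picture such safeguards is a thin wrapper that every agent action must pass through. The sketch below assumes a hypothetical allowlist, a per-run action budget, and a human-approval flag for risky actions; the action names and limits are illustrative only.

```python
from typing import List, Set


class GuardrailError(Exception):
    """Raised when an agent action is blocked by a safeguard."""


class Guardrails:
    def __init__(self, allowed: Set[str], needs_approval: Set[str], max_actions: int):
        self.allowed = allowed
        self.needs_approval = needs_approval
        self.max_actions = max_actions
        self.audit_log: List[str] = []  # monitoring: every permitted action is recorded

    def check(self, action: str, approved: bool = False) -> None:
        """Raise GuardrailError if the action must not run; otherwise log it."""
        if action not in self.allowed:
            raise GuardrailError(f"'{action}' is not on the allowlist")
        if len(self.audit_log) >= self.max_actions:
            raise GuardrailError("action budget exhausted")
        if action in self.needs_approval and not approved:
            raise GuardrailError(f"'{action}' requires human approval")
        self.audit_log.append(action)


# Hypothetical action names and limits, chosen for illustration.
guard = Guardrails(
    allowed={"send_email", "issue_refund"},
    needs_approval={"issue_refund"},
    max_actions=2,
)

guard.check("send_email")
try:
    guard.check("issue_refund")  # blocked: no approval given
except GuardrailError as err:
    print(f"blocked: {err}")
guard.check("issue_refund", approved=True)
print(guard.audit_log)
```

Capping the number of actions per run is a blunt but useful fail-safe: it limits how far a mistaken plan can go before a human notices.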

One of the most underrated questions in the generative AI vs. LLM debate is cost. How do expenses grow as these technologies are deployed at the enterprise level?

Cost curves for generative AI and LLMs at large-scale usage differ based on model type and deployment method.

LLMs are expensive to train but relatively affordable to use via APIs. Cloud providers charge per token, counting both input and output. This suits low to moderate usage. At scale, those per-token fees add up: a high-traffic chatbot can cost thousands per month.
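Per-token billing is easy to estimate once you know your traffic. The sketch below uses made-up prices and volumes (the per-million-token rates, request counts, and token lengths are all assumptions), so substitute your provider's actual price sheet.

```python
def monthly_api_cost(
    requests_per_month: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    price_in_per_million: float,
    price_out_per_million: float,
) -> float:
    """Estimate monthly spend when input and output tokens are billed separately."""
    input_cost = requests_per_month * input_tokens_per_request * price_in_per_million / 1e6
    output_cost = requests_per_month * output_tokens_per_request * price_out_per_million / 1e6
    return input_cost + output_cost


# Hypothetical chatbot: 1M requests per month, 500 input and 250 output tokens each,
# at assumed prices of 2 per million input tokens and 8 per million output tokens.
cost = monthly_api_cost(1_000_000, 500, 250, 2.0, 8.0)
print(f"{cost:,.0f} per month")  # prints "3,000 per month"
```

Because the cost is linear in request volume, ten times the traffic means ten times the bill, which is what makes the self-hosting comparison worth running.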

Self-hosting an LLM lowers per-query costs, but it requires an upfront investment in GPUs, supporting infrastructure, and engineering expertise. For predictable, high-volume workloads, self-hosting typically pays for itself within 6 to 12 months.
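The break-even point follows from simple arithmetic: divide the upfront investment by the monthly savings. The figures below are placeholders rather than benchmarks, and the function name is hypothetical.

```python
import math
from typing import Optional


def break_even_months(
    upfront_cost: float,
    api_monthly_cost: float,
    self_hosted_monthly_cost: float,
) -> Optional[int]:
    """Months until cumulative self-hosting cost no longer exceeds cumulative API cost.

    Returns None when self-hosting is not cheaper per month, so it never pays off.
    """
    monthly_savings = api_monthly_cost - self_hosted_monthly_cost
    if monthly_savings <= 0:
        return None
    return math.ceil(upfront_cost / monthly_savings)


# Placeholder numbers: 120k upfront, 30k per month on APIs vs 15k per month self-hosted.
print(break_even_months(120_000, 30_000, 15_000))  # prints 8
```

If the function returns `None`, or a number far beyond your planning horizon, API access is still the better deal at your current volume.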

Other generative AI models (image or video generation) are computationally intensive per request, so API costs tend to be higher per output. Self-hosting requires even more specialized hardware.

For most startups and mid-sized companies, API access makes sense early. However, self-hosting or hybrid approaches (using open-source models like LLaMA or Mistral) become appealing once usage passes the point where per-token fees exceed the cost of running your own infrastructure.

The key question: What does our cost curve look like at 10x scale? Run the numbers before you're locked into pricing that doesn't scale with your business.

How do you choose between generative AI and an LLM for your project? Start by defining the output you need.

Decision framework:

The trend is toward composable systems: LLMs for language, image generators for visuals, and agentic layers for orchestration. This modular approach provides flexibility without relying on a single model.
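A composable setup can be pictured as a small router that sends each request to the component suited to its output type. The handlers below are hypothetical stubs standing in for real model calls, and the output-type labels are assumptions made for this sketch.

```python
from typing import Callable, Dict


# Hypothetical stand-ins for real model calls.
def call_llm(prompt: str) -> str:
    return f"[text from LLM] {prompt}"


def call_image_generator(prompt: str) -> str:
    return f"[image from image model] {prompt}"


HANDLERS: Dict[str, Callable[[str], str]] = {
    "text": call_llm,
    "code": call_llm,
    "image": call_image_generator,
}


def route(output_type: str, prompt: str) -> str:
    """Dispatch a request to the model that produces the required output type."""
    handler = HANDLERS.get(output_type)
    if handler is None:
        raise ValueError(f"no model registered for output type '{output_type}'")
    return handler(prompt)


print(route("text", "Write a product description"))
print(route("image", "Sketch a landing page hero"))
```

Swapping a model then means changing one entry in `HANDLERS`, which is the flexibility the modular approach is after.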

Ready to move beyond the prompt and build a proprietary AI engine? Contact Codiste today to discuss your LLM development or generative AI development roadmap. Whether you need to optimize your large language models for cost at scale or deploy a multi-agent agentic AI system, our engineers provide the technical depth required to win in 2026.
