The transition from static LLM chats to autonomous AI agents hinges on one thing: how effectively your model can interact with the real world. While the Model Context Protocol (MCP) has emerged as the "USB-C for AI," simply connecting an ML model to a server isn't enough for production-grade reliability.

If you are an architect or developer tasked with integrating ML models with an MCP server, you are likely dealing with the "tool budget" dilemma, latency spikes, and the looming threat of prompt injection. Integrating these systems takes more than code; it requires a strategic framework that balances model autonomy with strict operational guardrails. This guide outlines essential best practices for MCP server development that help keep your AI agents as secure as they are capable.

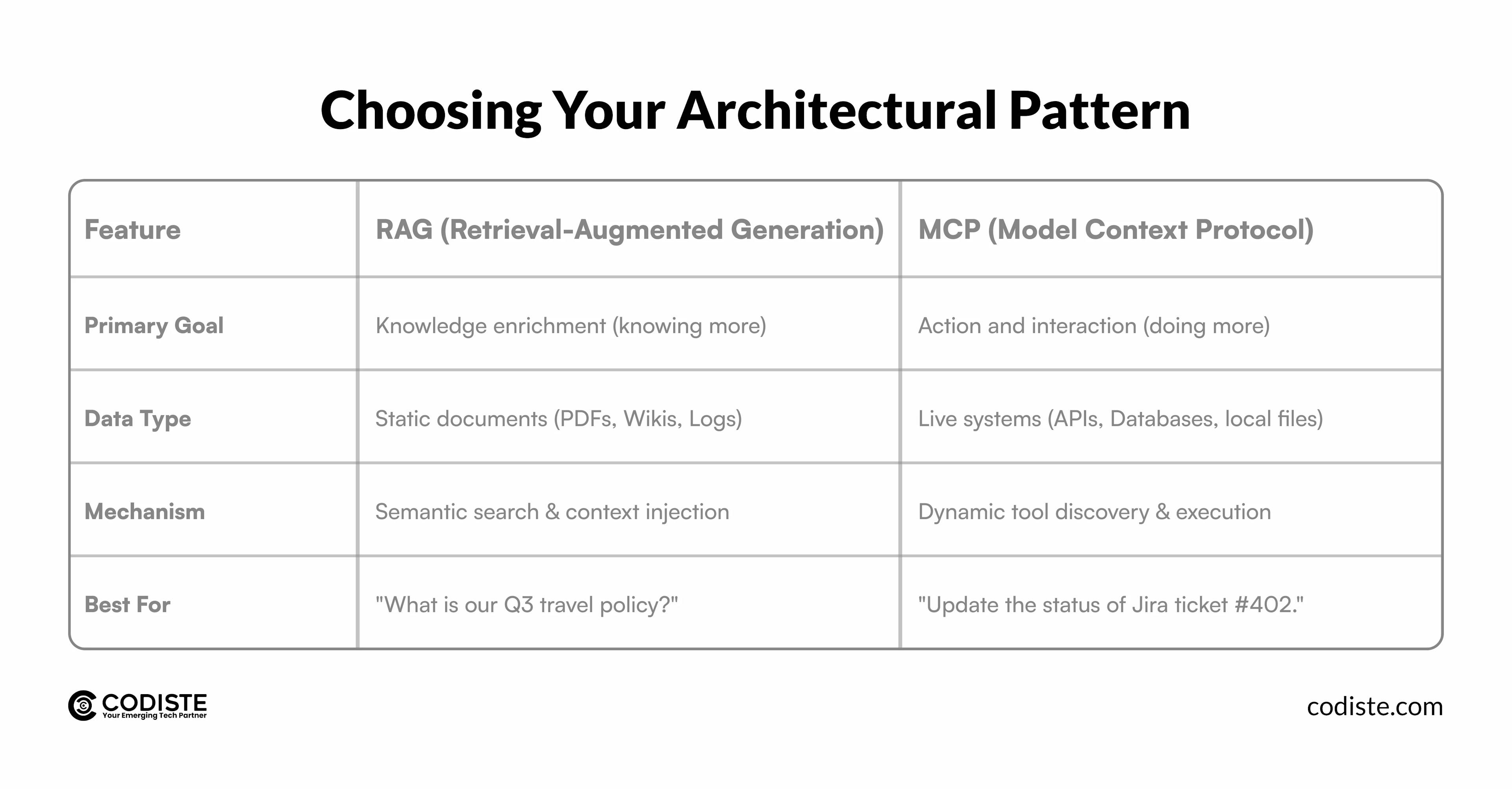

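Before diving into the code, you must determine whether MCP server integration is actually the right tool for your specific ML use case. A common mistake in machine learning development is treating MCP and RAG (Retrieval-Augmented Generation) as interchangeable: RAG retrieves relevant documents to ground the model's answers, while MCP gives the model tools to fetch live data and take actions in external systems.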

In a modern MCP client-server architecture, the most sophisticated agents use both: they use RAG to "read" the manual and MCP to "execute" the task. If your ML model needs to pull real-time data or modify state in an external system, integrating it with your MCP server is the clear path forward.

One of the most significant bottlenecks in LLM tool calling is context window bloat. When an agent is connected to an MCP server with dozens of tools, the model must process the definitions of every single tool before it even reads the user’s request.

Instead of exposing fifty individual API endpoints as fifty tools, follow the "Thin Server, Smart Client" rule. Group related functions into high-level tools or expose a `search_tools` function. This lets the agent discover only the tools it needs for a specific sub-task, reducing token consumption and latency.
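A `search_tools` entry point can be sketched in plain Python. The catalogue, tag sets, and keyword-overlap ranking below are illustrative assumptions; a production server might rank with embeddings and return each match's full input schema so the agent can call it next.

```python
from dataclasses import dataclass, field


@dataclass
class ToolSpec:
    name: str
    description: str
    tags: set[str] = field(default_factory=set)


# Hypothetical catalogue; a real server would hold full tool definitions with JSON schemas.
CATALOGUE = [
    ToolSpec("get_invoice", "Fetch a single invoice by ID", {"billing", "invoice"}),
    ToolSpec("list_overdue_invoices", "List invoices past their due date", {"billing", "invoice", "overdue"}),
    ToolSpec("create_ticket", "Open a support ticket", {"support", "ticket"}),
    ToolSpec("run_forecast", "Run the demand forecasting model", {"ml", "forecast"}),
]


def search_tools(query: str, limit: int = 3) -> list[dict]:
    """Return only the tool definitions relevant to the query, ranked by keyword overlap."""
    terms = {t.lower() for t in query.split()}
    scored = []
    for spec in CATALOGUE:
        # Match against tags, description words, and the parts of the tool name.
        vocabulary = spec.tags | set(spec.description.lower().split()) | set(spec.name.split("_"))
        score = len(terms & vocabulary)
        if score:
            scored.append((score, spec))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1].name))
    return [{"name": s.name, "description": s.description} for _, s in scored[:limit]]
```

The agent sees a single small tool definition up front and pulls in the other definitions on demand, instead of carrying all fifty in every request.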

Anthropic, which introduced MCP, suggests that for complex data manipulations, such as downloading a CSV and calculating a trend, it is more efficient to give the agent a code execution tool via MCP than to make multiple sequential tool calls. This keeps the intermediate "messy" data out of the LLM’s context window.
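Below is a minimal sketch of such a code execution tool. It assumes the agent writes a short Python script and the server supplies the CSV on standard input. The function name `execute_python`, the output cap, and the timeout are hypothetical choices. A child process provides isolation of state, not security: in production, run this inside an isolated container with no network access or credentials.

```python
import subprocess
import sys

MAX_OUTPUT_CHARS = 2_000  # hypothetical cap on how much text returns to the model's context


def execute_python(code: str, stdin_data: str = "", timeout_s: float = 10.0) -> dict:
    """Run agent-written Python in a separate interpreter and return only what it prints.

    NOTE: a subprocess is not a sandbox; wrap this in a locked-down container in production.
    """
    try:
        proc = subprocess.run(
            # -I: isolated mode, ignores PYTHON* environment variables and user site-packages
            [sys.executable, "-I", "-c", code],
            input=stdin_data,
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return {"ok": False, "output": f"timed out after {timeout_s} s"}
    text = proc.stdout if proc.returncode == 0 else proc.stderr
    # Keep only the tail so a runaway print loop cannot flood the context window.
    return {"ok": proc.returncode == 0, "output": text[-MAX_OUTPUT_CHARS:]}


# Example script an agent might write: the raw rows are processed server-side.
AGENT_SCRIPT = """
import csv, sys
rows = list(csv.DictReader(sys.stdin))
xs = [float(r["day"]) for r in rows]
ys = [float(r["sales"]) for r in rows]
mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
print(f"trend: {slope:+.2f} per day over {len(rows)} rows")
"""
```

Only the one-line summary printed by the script returns to the model; the CSV rows never enter its context. Truncating to a fixed tail is a blunt but predictable way to stop a misbehaving script from flooding the context window.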

Security is the biggest hurdle in deploying machine learning models within an agentic framework. Because an MCP server acts on the model's behalf with whatever permissions its tools hold, it presents a "confused deputy" risk: the model can be tricked, for example through prompt injection, into using those elevated permissions to perform a malicious act.

Never assume a tool call is safe just because it came from your own LLM.
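In practice, that means re-checking every model-supplied argument on the server before acting on it. The sketch below assumes a hypothetical `read_file` tool confined to one directory; `validate_read_path`, `ToolArgumentError`, and the suffix allowlist are illustrative names, not part of any MCP SDK.

```python
from pathlib import Path

# Hypothetical server-side policy for a `read_file` tool.
ALLOWED_SUFFIXES = {".csv", ".json", ".txt"}


class ToolArgumentError(ValueError):
    """Raised when model-supplied arguments violate server-side policy."""


def validate_read_path(base_dir: Path, requested: str) -> Path:
    """Resolve a model-supplied path and refuse anything outside base_dir.

    resolve() collapses '..' segments and follows existing symlinks, so the
    containment check runs on the real target, not on the string the model sent.
    """
    base = base_dir.resolve()
    candidate = (base / requested).resolve()
    if not candidate.is_relative_to(base):  # Path.is_relative_to needs Python 3.9+
        raise ToolArgumentError(f"path escapes the allowed directory: {requested!r}")
    if candidate.suffix.lower() not in ALLOWED_SUFFIXES:
        raise ToolArgumentError(f"file type not allowed: {candidate.suffix or '(none)'}")
    return candidate
```

The check lives on the server, so it holds no matter how persuasive an injected instruction was in convincing the model to request `../../etc/passwd`.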

If you are working in regulated industries (FinTech, HealthTech), your MCP server development must include centralized logging. Every tool call, the arguments passed by the LLM, and the raw output returned by the system should be captured in an immutable audit trail. This is essential for debugging non-deterministic model behavior and meeting regulatory requirements.
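One way to make such a trail tamper-evident is to chain each record to the hash of the previous one, so that editing any past entry breaks verification. The sketch below keeps records in memory for brevity and assumes tools are called with keyword arguments, as when arguments arrive as a JSON object; `AuditLog`, `audited`, and `get_balance` are hypothetical names.

```python
import functools
import hashlib
import json
import time

GENESIS_HASH = "0" * 64


class AuditLog:
    """In-memory, hash-chained audit trail: editing any past record breaks the chain."""

    def __init__(self) -> None:
        self.records: list[dict] = []
        self._prev_hash = GENESIS_HASH

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, entry: dict) -> None:
        # Round-trip through JSON so later mutation of the caller's objects cannot alter the record.
        body = json.loads(json.dumps({**entry, "prev_hash": self._prev_hash}, default=str))
        record = {**body, "hash": self._digest(body)}
        self.records.append(record)
        self._prev_hash = record["hash"]

    def verify(self) -> bool:
        prev = GENESIS_HASH
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body.get("prev_hash") != prev or self._digest(body) != record["hash"]:
                return False
            prev = record["hash"]
        return True


def audited(log: AuditLog):
    """Decorator recording the tool name, the LLM's arguments, and the raw output or error."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(**kwargs):
            entry = {"tool": fn.__name__, "args": kwargs, "ts": time.time()}
            try:
                result = fn(**kwargs)
            except Exception as exc:
                log.append({**entry, "output": None, "error": repr(exc)})
                raise
            log.append({**entry, "output": result, "error": None})
            return result
        return wrapper
    return decorator


log = AuditLog()


@audited(log)
def get_balance(account_id: str) -> dict:  # hypothetical tool
    return {"account_id": account_id, "balance": 100.0}
```

Hash chaining makes edits detectable, but it does not stop someone from deleting the newest records, so a real deployment would also ship each record to a centralized, write-once store.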

For teams focusing on ML development, the ability to pull specialized models from the Hugging Face ecosystem into an MCP workflow is a game-changer. Whether you’re using a specialized BERT model for named-entity recognition (NER) or a Flux model for image generation, the integration follows the same pattern: the model runs behind a tool on the MCP server, and the agent calls that tool rather than the model itself.

Hugging Face now provides a dedicated MCP server that connects your assistant directly to the Hub.

While the protocol is language-agnostic, Python is the most common choice for MCP servers in ML workflows because of its rich library ecosystem (PyTorch, scikit-learn).
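Here is a minimal sketch of a Python MCP server that exposes one model as a tool. It assumes the official MCP Python SDK (`pip install mcp`) and its `FastMCP` class. The churn model, its coefficients, and the server name are hypothetical stand-ins for a real trained model. The SDK import is optional so the tool logic can be unit-tested without a running server.

```python
import math

try:
    # Official MCP Python SDK; optional here so the tool function stays testable on its own.
    from mcp.server.fastmcp import FastMCP
except ImportError:
    FastMCP = None

# Hypothetical logistic-regression coefficients standing in for a trained model.
# In practice you would load real weights once at startup (e.g. with joblib or torch.load).
WEIGHTS = {"tenure_months": -0.08, "support_tickets": 0.45}
BIAS = -0.5


def predict_churn(tenure_months: float, support_tickets: int) -> dict:
    """Return the churn probability for one customer as a small, structured result."""
    z = BIAS + WEIGHTS["tenure_months"] * tenure_months + WEIGHTS["support_tickets"] * support_tickets
    probability = 1.0 / (1.0 + math.exp(-z))  # logistic (sigmoid) function
    return {
        "churn_probability": round(probability, 3),
        "label": "at_risk" if probability >= 0.5 else "stable",
    }


if FastMCP is not None:
    mcp = FastMCP("ml-models")  # server name is arbitrary
    # FastMCP derives the tool description from the docstring and the input schema from the type hints.
    mcp.add_tool(predict_churn)


def main() -> None:
    """Entry point: serve the registered tools over stdio, the SDK's default transport."""
    if FastMCP is None:
        raise SystemExit("Install the MCP Python SDK first: pip install mcp")
    mcp.run()
```

Call `main()` from your entry point (for example under `if __name__ == "__main__":`) to start the server. Keeping the model call in a plain function means you can test it without a client.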

ML models are inherently "noisy." When your MCP server calls an ML model, the output might not always match the expected JSON schema.
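A practical guard is to validate every model result on the server and hand the agent a structured error instead of malformed data. The sketch below assumes an NER-style tool whose model should emit a JSON array of `{"text", "label", "score"}` objects; the field names and the [0, 1] score range are illustrative assumptions.

```python
import json
from typing import Any


def _check_entity(index: int, item: Any) -> dict:
    """Validate one entity against the assumed schema and return a normalized copy."""
    if not isinstance(item, dict):
        raise ValueError(f"entity {index} is not an object")
    text, label, score = item.get("text"), item.get("label"), item.get("score")
    if not isinstance(text, str) or not isinstance(label, str):
        raise ValueError(f"entity {index}: 'text' and 'label' must be strings")
    # bool is a subclass of int in Python, so reject it explicitly; NaN fails the range check.
    if isinstance(score, bool) or not isinstance(score, (int, float)) or not 0.0 <= score <= 1.0:
        raise ValueError(f"entity {index}: 'score' must be a number in [0, 1]")
    return {"text": text, "label": label, "score": float(score)}


def validate_entities(raw_output: str) -> dict:
    """Parse raw model output; return clean entities or a structured, agent-readable error."""
    try:
        data = json.loads(raw_output)
        if not isinstance(data, list):
            raise ValueError("expected a JSON array of entities")
        entities = [_check_entity(i, item) for i, item in enumerate(data)]
    except ValueError as exc:  # json.JSONDecodeError is a subclass of ValueError
        return {"ok": False, "error": str(exc), "entities": []}
    return {"ok": True, "error": None, "entities": entities}
```

Because the error response is itself well-formed, the agent can choose to retry, rephrase, or fall back rather than pass broken output downstream.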

Pro Tip: Use "Prompts as Macros." To process an image, the agent should use a single prompt template on the MCP server that encodes the chain of steps, rather than improvising calls across five different tools. This keeps the workflow consistent and cuts unnecessary round-trips between the client and server.
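MCP servers can expose prompts alongside tools. This is a minimal sketch that assumes the official MCP Python SDK's `FastMCP.prompt()` decorator. The workflow text and the tool names `describe_image`, `detect_objects`, and `extract_text` are hypothetical.

```python
try:
    # Official MCP Python SDK; optional so the template can be tested without it.
    from mcp.server.fastmcp import FastMCP
except ImportError:
    FastMCP = None


def process_image_workflow(image_url: str, output_format: str = "json") -> str:
    """Hypothetical prompt template that packages a multi-step image workflow into one instruction."""
    steps = [
        f"1. Call `describe_image` on {image_url}.",
        "2. Call `detect_objects` on the same image and keep detections with score >= 0.5.",
        "3. Call `extract_text` only if step 1 mentions visible text.",
        f"4. Merge the results into a single {output_format} object with keys "
        "'caption', 'objects' and 'text'.",
    ]
    return "Process the image using exactly these steps:\n" + "\n".join(steps)


if FastMCP is not None:
    mcp = FastMCP("image-workflows")
    # Equivalent to decorating the function with @mcp.prompt().
    mcp.prompt()(process_image_workflow)
```

The client fetches this template once and gets a fixed plan, so the agent does not have to rediscover the sequence through trial-and-error tool calls.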

The successful integration of ML models with your MCP server is what separates a simple chatbot from a truly autonomous agent. By focusing on MCP server security, optimizing your tool calling budget, and adhering to the Anthropic MCP Standard, you create a system that is not only powerful but also predictable and secure.

As the ecosystem shifts toward managed MCP server services, the foundational work you do today in MCP server development will be the competitive advantage that allows your AI to navigate complex business workflows with precision.

Ready to scale your AI capabilities?

At Codiste, we specialize in high-performance ML model and MCP server integration and custom agentic frameworks. Whether you're looking to modernize your legacy APIs for the AI era or build a bespoke machine learning development pipeline, our team is here to help.

Contact Codiste today for a technical consultation on your MCP architecture.
